Built for real-world AI systems

Persistent memory infrastructure for AI agents—from Memory Spaces and Hive Mode to ACID conversations and vector search. Everything your agents need to remember and coordinate.

One API orchestrates the entire stack

cortex.memory.* coordinates the ACID, Vector, Facts, and Graph layers for you. You call one method, and every layer stays in sync.

Immutable Source

Append-only conversations. Never modified, kept forever. Perfect audit trail.

- Conversations (memorySpace)

- Immutable KB (shared)

- Mutable data (shared)
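The append-only idea can be illustrated with a small in-memory sketch. Nothing here is part of the Cortex SDK: `ConversationLog`, `append`, and `history` are hypothetical names chosen for illustration, and a real store would persist messages rather than hold them in an array.

```typescript
// Hypothetical sketch of an append-only conversation log (not the Cortex SDK).
type Role = "user" | "agent";

interface Message {
  readonly seq: number; // position in the log, assigned on append
  readonly role: Role;
  readonly text: string;
}

class ConversationLog {
  private readonly messages: Message[] = [];

  // Appending is the only write operation; there is no update or delete.
  append(role: Role, text: string): Message {
    const message: Message = Object.freeze({ seq: this.messages.length, role, text });
    this.messages.push(message);
    return message;
  }

  // Return a copy so callers cannot change the stored history.
  history(): readonly Message[] {
    return [...this.messages];
  }
}

const log = new ConversationLog();
log.append("user", "I prefer TypeScript.");
log.append("agent", "Noted.");
```

Because the class exposes no update or delete method and hands out copies, the stored sequence can only grow, which is what makes it usable as an audit trail.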

Searchable Index

Fast semantic search with embeddings. Links to ACID via conversationRef.

- Embeddings (any dimension)

- Semantic search

- Versioned (retention rules)
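To make the semantic-search step concrete, here is a minimal sketch of ranking stored embeddings by cosine similarity. It is an assumption about how such an index could work, not Cortex's implementation: `IndexedMemory`, `cosineSimilarity`, and `searchIndex` are hypothetical names, and the three-dimensional vectors stand in for real embeddings. Only the `conversationRef` field name comes from the text above.

```typescript
// Hypothetical sketch of vector search; not the Cortex implementation.
interface IndexedMemory {
  conversationRef: string; // points back to the source conversation
  embedding: number[];
}

function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("dimension mismatch");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // a zero vector has no direction
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Score every memory against the query and keep the topK best matches.
function searchIndex(index: IndexedMemory[], query: number[], topK: number): IndexedMemory[] {
  return index
    .map((memory) => ({ memory, score: cosineSimilarity(memory.embedding, query) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, topK)
    .map((entry) => entry.memory);
}

const index: IndexedMemory[] = [
  { conversationRef: "conv-1", embedding: [1, 0, 0] },
  { conversationRef: "conv-2", embedding: [0, 1, 0] },
  { conversationRef: "conv-3", embedding: [0.9, 0.1, 0] },
];
const hits = searchIndex(index, [1, 0, 0], 2);
```

Each hit carries its `conversationRef`, so a caller can follow it back to the original conversation. A linear scan like this is only practical for small indexes; large ones typically rely on an approximate nearest-neighbor structure.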

Extracted Knowledge

LLM-extracted structured facts. 60-90% storage savings, infinite context.

- Fact extraction

- Triple store (S-P-O)

- Graph sync ready
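A subject-predicate-object (S-P-O) store can be sketched in a few lines. This is an illustrative model only: `Fact`, `TripleStore`, `add`, and `match` are hypothetical names, the facts are invented, and the sketch skips the LLM extraction step entirely.

```typescript
// Hypothetical sketch of a subject-predicate-object store; not the Cortex SDK.
interface Fact {
  subject: string;
  predicate: string;
  object: string;
}

class TripleStore {
  private readonly facts: Fact[] = [];

  add(subject: string, predicate: string, object: string): void {
    this.facts.push({ subject, predicate, object });
  }

  // Any field left undefined in the pattern acts as a wildcard.
  match(pattern: Partial<Fact>): Fact[] {
    return this.facts.filter(
      (fact) =>
        (pattern.subject === undefined || fact.subject === pattern.subject) &&
        (pattern.predicate === undefined || fact.predicate === pattern.predicate) &&
        (pattern.object === undefined || fact.object === pattern.object),
    );
  }
}

const store = new TripleStore();
store.add("Alex", "prefers", "TypeScript");
store.add("Alex", "works_at", "Acme");
store.add("Sam", "prefers", "Rust");

const alexFacts = store.match({ subject: "Alex" });
const preferences = store.match({ predicate: "prefers" });
```

Storing a short triple instead of the full exchange that produced it is where the storage savings come from. The same triples map naturally onto graph nodes and edges.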

Single Interface

cortex.memory.* orchestrates ALL layers automatically—ACID, Vector, Facts, Graph. One API, complete automation.

- Auto layer coordination

- Graph sync included

- Type-safe & tested

Everything your AI needs to remember

From simple user preferences to complex multi-agent workflows. Cortex handles it all with enterprise-grade reliability.

Single API Layer

One unified cortex.memory.* interface for all operations. Simple, intuitive, and powerful.

Conversation Layer

Immutable ACID conversations with a mutable sub-layer for preferences and profiles. Full history preservation.

Vector Index Layer

Fast semantic search with embedding support. Query millions of messages in milliseconds.

Facts Layer

LLM-powered extraction with 60-90% storage savings. Transform conversations into structured knowledge.

Graph Layer Integration

Ties relationships across all layers. Entity tracking, multi-hop traversal, and knowledge graphs.

GDPR Cascade Delete

One-click deletion across all layers with complete audit trail. Built-in compliance and governance.

Streaming Support

Native edge runtime compatibility. Works with Vercel AI SDK, OpenAI SDK, and LangChain.

MCP Server

Built-in Model Context Protocol server. Works with Cursor, Claude Desktop, and custom MCP clients.

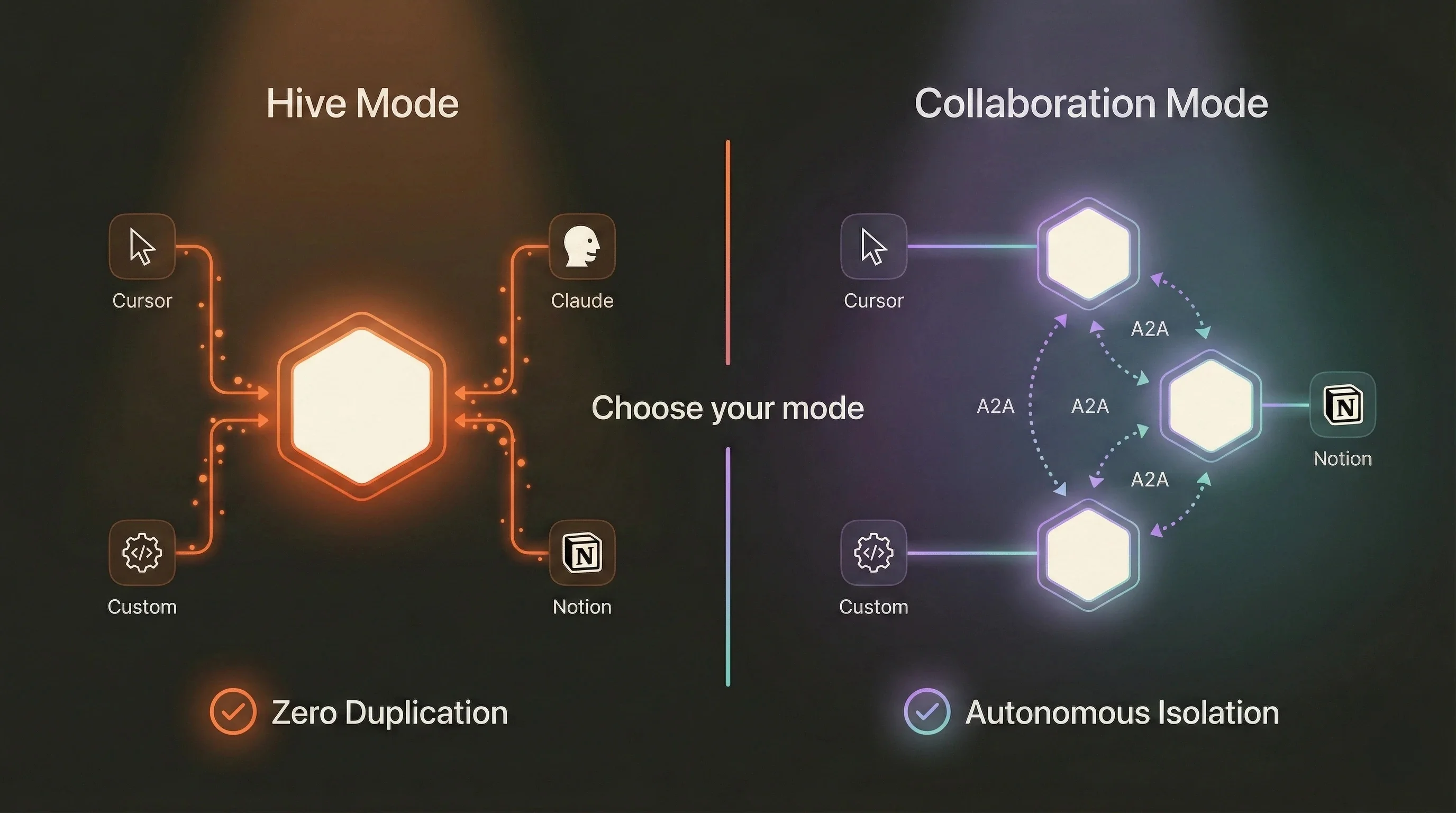

Hive vs Collaboration

Hive Mode for shared memory (MCP, personal AI). Collaboration Mode for autonomous multi-agent systems.

Built for real-world applications

From personal AI assistants to enterprise multi-agent systems, Cortex scales with your needs.

Chatbots & AI Assistants

Remember user preferences and conversation history across unlimited sessions.

- User context preservation

- Preference recall

- Session continuity

Multi-Agent Systems

Coordinate specialized agents with Context Chains and Hive Mode.

- Agent coordination

- Shared memory spaces

- A2A communication

RAG Pipelines

Store and retrieve relevant context for LLM prompts with semantic search.

- Semantic retrieval

- Context injection

- 99% token reduction

Enterprise Support

Maintain customer context across interactions with GDPR compliance.

- Customer history

- Cascade deletion

- Audit trails

Personal AI Tools

MCP integration for memory that follows you across Cursor, Claude Desktop, and custom MCP clients.

- Cross-app memory

- Zero duplication

- Model Context Protocol (MCP)

Knowledge Management

Organizational memory across teams with graph database integration.

- Team workspaces

- Graph queries

- Knowledge graphs

Two modes. One powerful system.

Choose between shared memory spaces (Hive Mode) and isolated spaces (Collaboration Mode) based on your use case.

Hive Mode

Multiple agents share one memorySpace

- Zero duplication—one memory serves all agents

- Perfect for MCP cross-application memory

- Instant consistency across all tools

- Single write, everyone benefits

Perfect for:

Personal AI tools • Team workspaces • MCP integration

Collaboration Mode

Each agent has a separate memorySpace

- Complete isolation—prevents memory poisoning

- Autonomous agents with independent memory

- Secure cross-space access via Context Chains

- A2A communication with audit trails

Perfect for:

Autonomous swarms • Enterprise workflows • Compliance
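The difference between the two modes comes down to which memorySpace ID each agent reads and writes. The sketch below models that with a plain map. `MemorySpaces`, `write`, and `read` are hypothetical names rather than Cortex APIs, the agent and space IDs are invented, and cross-space access via Context Chains is not modeled.

```typescript
// Hypothetical sketch of the two modes; the names below are not Cortex APIs.
class MemorySpaces {
  private readonly spaces = new Map<string, string[]>();

  write(memorySpaceId: string, memory: string): void {
    const memories = this.spaces.get(memorySpaceId) ?? [];
    memories.push(memory);
    this.spaces.set(memorySpaceId, memories);
  }

  // Return a copy so one reader cannot alter what another reader sees.
  read(memorySpaceId: string): string[] {
    return [...(this.spaces.get(memorySpaceId) ?? [])];
  }
}

const spaces = new MemorySpaces();

// Hive Mode: several tools write to and read from the same space.
const hiveSpace = "user-123-personal";
spaces.write(hiveSpace, "cursor: user prefers tabs");
const seenByClaude = spaces.read(hiveSpace);

// Collaboration Mode: each agent owns its own space.
spaces.write("agent-research", "draft findings");
spaces.write("agent-review", "review notes");
const researchView = spaces.read("agent-research");
const reviewView = spaces.read("agent-review");
```

In the Hive case a single write is visible to every tool that uses the shared ID. In the Collaboration case a bad write by one agent stays inside that agent's own space.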

Never run out of context again

Recall from millions of past messages via semantic search. Up to 99% token reduction compared to traditional context accumulation.

Unlimited Recall

Access millions of memories from any point in history via semantic search

99% Savings

Token reduction through fact extraction means infinite context fits in finite windows

<100ms

Retrieve relevant memories from massive datasets in under 100 ms
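As a rough worked example of where a figure like 99% can come from, compare sending the whole history with sending only a handful of retrieved memories. The numbers below are made-up assumptions for illustration, not benchmarks, and `tokenReduction` is a hypothetical helper.

```typescript
// Illustrative arithmetic only; all numbers are assumptions, not measurements.
function tokenReduction(fullHistoryTokens: number, retrievedTokens: number): number {
  if (fullHistoryTokens <= 0) throw new Error("fullHistoryTokens must be positive");
  return 1 - retrievedTokens / fullHistoryTokens;
}

// Assume a 200,000-token history and 10 retrieved memories of about 200 tokens each.
const fullHistoryTokens = 200000;
const retrievedTokens = 10 * 200;
const reduction = tokenReduction(fullHistoryTokens, retrievedTokens);
const reductionPercent = Math.round(reduction * 100);
```

Under these assumptions the prompt carries 2,000 tokens instead of 200,000. The actual saving depends on how long the history is and how many memories each query retrieves.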

    import { Cortex } from '@cortexmemory/sdk'

    // Initialize with Convex
    const cortex = new Cortex({
      convexUrl: process.env.CONVEX_URL!
    })

    // Store with streaming (v0.9.0+)
    const result = await cortex.memory.rememberStream({
      memorySpaceId: "user-123-personal",
      conversationId: "conv-1",
      userMessage: "What are best practices?",
      responseStream: stream, // Vercel AI SDK
      userId: "user-123",
      userName: "Alex",
      extractFacts: true // Auto fact extraction
    })

    // Search across millions of memories
    const memories = await cortex.memory.search(
      "user-123-personal",
      "coding preferences",
      { enrichConversation: true }
    )

    // 99% token reduction via semantic retrieval

Simple API. Powerful architecture.

Built with developer experience in mind. Get started in minutes with the Cortex CLI, scale to millions of memories with enterprise-grade reliability.

- One API orchestrates all layers (ACID + Vector + Facts + Graph)

- Infinite context via semantic search (up to 99% token savings)

- Hive Mode or Collaboration Mode for multi-agent systems

- Streaming support (ReadableStream & AsyncIterable)

- Optional graph database (Neo4j/Memgraph auto-sync)

- Automatic layer coordination—no manual management

- GDPR cascade deletion with complete audit trails

- Framework-agnostic (LangChain, Vercel AI, custom)

- Embedding-agnostic (OpenAI, Cohere, local models)

- Real-time sync via Convex reactive queries

Works with your favorite tools

Framework-agnostic, LLM-agnostic, embedding-agnostic. Built for flexibility.